Beyond C++

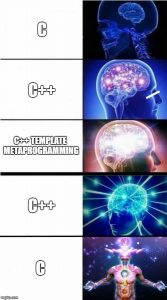

A while ago, two of my colleagues were putting effort into migrating our main code base and build system to Visual Studio 2017 and the C++17 standard. Admirable and sensible. Of course, that was reason enough for another colleague of mine and me to joke around about downgrading our code base to C++03 or C++98, or maybe even all the way down to C. Don’t worry, all four of us were laughing. (Or were we?)

At that time, my joke-buddy pointed me to a blog post by aras-p, “Modern C++ Lamentations.” Read it! It’s worth it. And don’t go, “Maybe that’s true in the gaming industry. It doesn’t apply to my work.” Well, I do not work in the gaming industry. And you know what? It applies to my work pretty much 100%.

In my opinion, “modern” C++ is too complex, too bloated, too much of a pose of “look, I can write cool code,” and it misses the point of solving problems.

[…] to me this feels like someone decided that “Perl is clearly too readable, but Brainfuck is too unreadable, let’s aim for somewhere in the middle”.

Many language features are valid, others are just “cool.” Now, of course, I understand that different people will find different parts of the language good. There are some aspects, however, which are objectively bad. Look at the compile times and debug times mentioned in that article. At least those make a very valid point.

C++ compilation times have been a source of pain in every non-trivial-size codebase I’ve worked on. […] Yet it feels like the C++ community at large pretends that is not an issue, with each revision of the language putting even more stuff into header files, and even more stuff into templated code that has to live in header files.

I was a hobby programmer in school; was a part-time programmer while studying software engineering; did my Ph.D. in computer science on computer graphics and visualization, while writing a large-scale, modular, high-performance visualization software; worked as a senior software developer in a company; and I am now the manager of a team of software engineers. I think it is valid to say I have been programming almost my whole life. I still try to make some minor improvements or bug fixes, even as a manager. Most likely my team thinks I should stop messing with “their code.” I won’t. My point is:

I have been programming almost my whole life. And I did it in more than a dozen different programming languages. (While writing this I counted 15, not including scripting languages. But most likely I forgot some.) Given this experience, let me say this:

C++ is not the best programming language. In modern C++ not everything has improved.

Please! Start (again) thinking “How do I solve this problem,” and not “How do I solve this problem with variadic templates wrapped in lambdas with ranges because they are so cool.”

While I was lecturing at the university on C++ for computer graphics, I could see, clear as daylight, the different types of up-and-coming programmers. And there is this specific sub-type of “programming artists”: programmers who think their source code is art, above and beyond the trivial programs others write. I will not comment on those any further. But I noticed that in the fields where C++ is used, especially so-called modern C++, those guys are seen pretty often! Sad.

As a closing note: Nowadays, when I start a project and think about which programming language(s) to use, C++ is not at the top of the list anymore.
